AI Model Size and Growth

Artificial Intelligence (AI) has evolved rapidly over the past few years and is now a core part of modern technology. Understanding how AI models are measured, and how quickly they are growing, helps in judging what a model can do and what it costs to run. This article explains what AI model size is, how it is measured, and how models have grown compared to five years ago.

What is AI Model Size?

AI model size refers to the scale or capacity of a machine learning model, most often expressed as its number of parameters. In simple terms, it indicates how much the model can learn from its training data and represent internally. Larger models have more parameters, which lets them learn more complex patterns and perform advanced tasks such as language translation, image recognition, and content generation.

For example, modern language models can follow context, generate human-like text, and assist with programming. These capabilities depend in large part on model size, together with training data and architecture.

How is AI Model Size Measured?

AI model size is primarily measured using the following key factors:

Number of Parameters

Parameters are the internal variables of a model that are learned during training. These include weights and biases in neural networks. The number of parameters is the most common way to measure model size.

- Small models: Thousands to millions of parameters

- Medium models: Millions to billions of parameters

- Large models: Hundreds of billions or even trillions of parameters
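To make the idea of counting parameters concrete, here is a minimal sketch for a fully connected network. The function name `count_dense_parameters` and the example layer widths are illustrative assumptions, not taken from any particular model.

```python
def count_dense_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the width of each layer, input first,
    for example [784, 128, 10] (a hypothetical small classifier).
    """
    total = 0
    for inputs, outputs in zip(layer_sizes, layer_sizes[1:]):
        weights = inputs * outputs  # one weight per input-output connection
        biases = outputs            # one bias per output unit
        total += weights + biases
    return total


if __name__ == "__main__":
    # 784*128 + 128 + 128*10 + 10 parameters
    print(count_dense_parameters([784, 128, 10]))  # prints 101770
```

Even this small network has about a hundred thousand parameters, which places it in the "small models" range above.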

Model Storage Size

This refers to how much disk space the model occupies, usually measured in megabytes (MB), gigabytes (GB), or terabytes (TB). It depends on the number of parameters and on the numeric precision used to store each one. Larger models require more storage and computational resources.
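As a rough illustration, storage size can be approximated as the number of parameters multiplied by the bytes used per parameter. The sketch below assumes the file contains only the parameters, with no metadata or compression, and the 7-billion-parameter figure is a hypothetical example.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAMETER = {"float32": 4, "float16": 2, "int8": 1}


def estimate_storage_gb(num_parameters, dtype="float32"):
    """Approximate model size in gigabytes (1 GB = 10**9 bytes)."""
    return num_parameters * BYTES_PER_PARAMETER[dtype] / 1e9


if __name__ == "__main__":
    hypothetical_model = 7_000_000_000  # assumed parameter count
    for dtype in BYTES_PER_PARAMETER:
        size = estimate_storage_gb(hypothetical_model, dtype)
        print(f"{dtype}: {size:.1f} GB")
```

Under these assumptions, the same model takes 28 GB at 32-bit precision, 14 GB at 16-bit, and 7 GB at 8-bit, which is why lower precision is a common way to shrink models for deployment.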

Computational Requirements

Model size is also reflected indirectly in the computational power required to train and run a model. This includes GPU/TPU usage, memory consumption, and inference speed.
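One commonly cited rule of thumb, which is not stated in this article and applies mainly to dense transformer-style models, estimates training compute as roughly 6 floating-point operations per parameter per training token. The sketch below uses that approximation. The constant, the function name, and the example numbers are all assumptions for illustration.

```python
# Rule-of-thumb constant (an assumption, not an exact figure):
# about 6 floating-point operations per parameter per training token.
FLOPS_PER_PARAMETER_PER_TOKEN = 6


def estimate_training_flops(num_parameters, num_tokens):
    """Rough estimate of total training compute in FLOPs."""
    return FLOPS_PER_PARAMETER_PER_TOKEN * num_parameters * num_tokens


if __name__ == "__main__":
    # Hypothetical model: 1 billion parameters, 20 billion training tokens.
    flops = estimate_training_flops(1_000_000_000, 20_000_000_000)
    print(f"{flops:.2e} FLOPs")
```

The estimate scales with both model size and dataset size, so doubling either one roughly doubles the training cost.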

Training Data Size

While not a direct measure, the amount of data used to train a model often correlates with its size and performance. Larger models typically require massive datasets.

Why Does Model Size Matter?

The size of an AI model impacts:

- Accuracy: Larger models can capture more complex patterns.

- Performance: Larger models often perform better across diverse tasks.

- Resource Usage: Bigger models require more hardware and energy.

- Deployment: Smaller models are easier to deploy on devices like smartphones.

AI Growth Rate Compared to 5 Years Ago

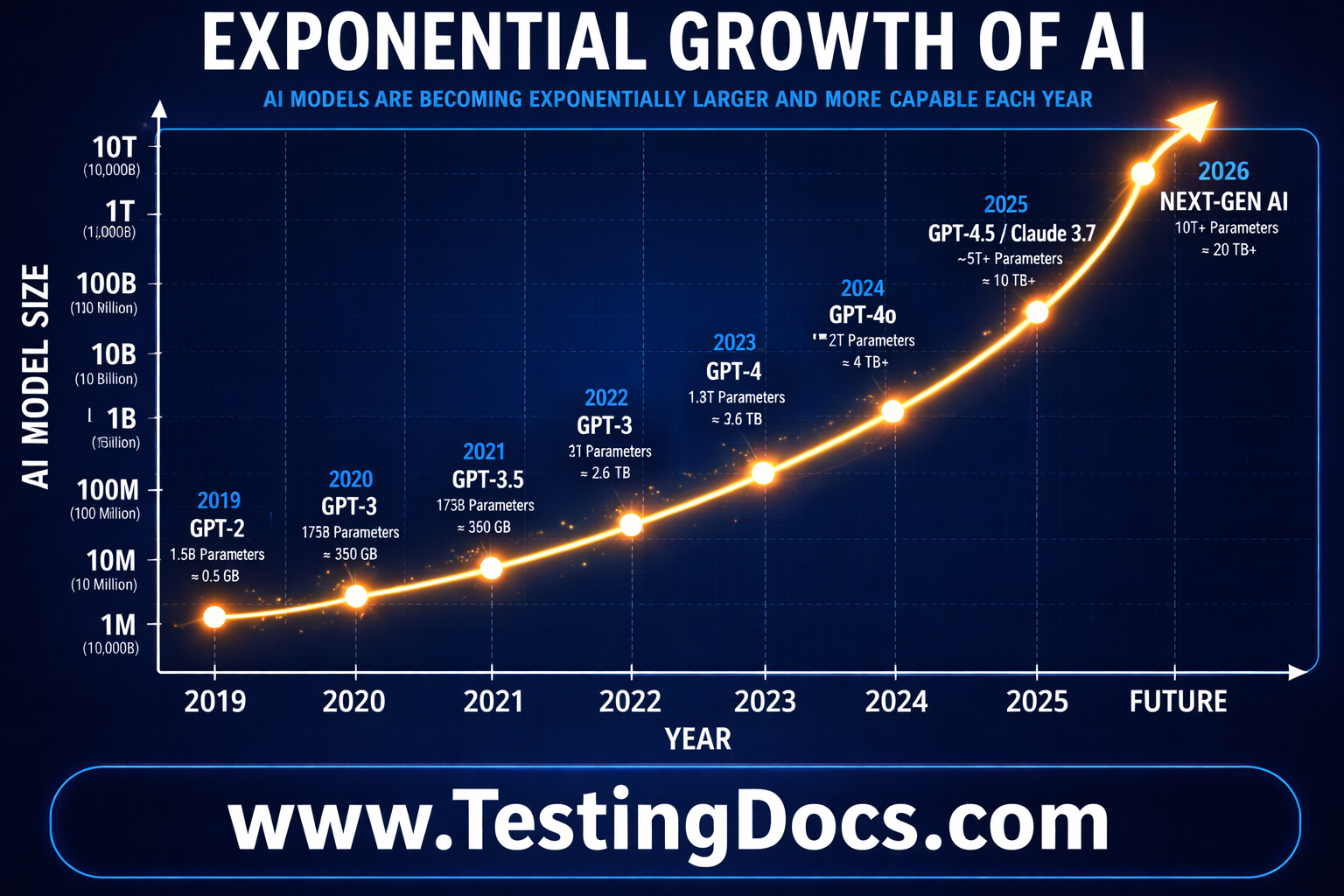

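The growth of AI over the past five years has been extraordinary. Around 2020, most widely used AI models had millions to a few billion parameters, although the largest, such as GPT-3 with 175 billion parameters, were already far bigger. Today, leading models have hundreds of billions of parameters, and some are reported to reach trillions.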

Growth Trends

- Exponential Increase in Parameters: Model sizes have increased by over 100x in some cases.

- Improved Hardware: GPUs and specialized AI chips have become more powerful and accessible.

- Better Algorithms: Advances in neural network architectures have improved efficiency and performance.

- Wider Applications: AI is now used in healthcare, finance, education, and entertainment.
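To put the "over 100x" figure in perspective, the sketch below converts a total growth factor over a period into an equivalent yearly growth factor and doubling time. It assumes smooth exponential growth, which is a simplification, and the function names are illustrative.

```python
import math


def annual_growth_factor(total_growth, years):
    """Yearly multiplier that compounds to total_growth over the period."""
    return total_growth ** (1 / years)


def doubling_time_years(total_growth, years):
    """Years needed to double, assuming constant exponential growth."""
    return years * math.log(2) / math.log(total_growth)


if __name__ == "__main__":
    # 100x growth over 5 years, the figure used in the list above.
    print(f"Growth per year: {annual_growth_factor(100, 5):.2f}x")
    print(f"Doubling time: {doubling_time_years(100, 5):.2f} years")
```

Under this assumption, 100x growth in five years corresponds to roughly 2.5x growth per year, or a doubling about every nine months.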

Comparison: 5 Years Ago vs. Now

| | 5 Years Ago (2020) | Today (2025+) |
|---|---|---|
| Model Size | Millions to billions of parameters | Hundreds of billions to trillions |
| Hardware | Limited GPU usage | Advanced GPUs, TPUs, and AI accelerators |
| Applications | Basic NLP and image tasks | Advanced generative AI, automation, and reasoning |
| Accessibility | Mostly research labs | Available via APIs and cloud platforms |

Challenges of Growing AI Models

While larger models offer better capabilities, they also introduce challenges:

- High computational cost

- Increased energy consumption

- Environmental impact

- Difficulty in deployment on low-resource devices

Future of AI Model Growth

The future of AI is not just about making models bigger, but also smarter and more efficient. Researchers are focusing on:

- Model optimization and compression

- Efficient training techniques

- Edge AI for smaller devices

- Responsible and ethical AI development

Conclusion

AI model size is a fundamental concept that strongly influences the capability and performance of machine learning systems. Over the past five years, model sizes have grown by orders of magnitude, driven by advances in computing power and algorithms. For IT students, understanding these concepts is crucial for building a strong foundation in AI and staying relevant in this rapidly evolving field.