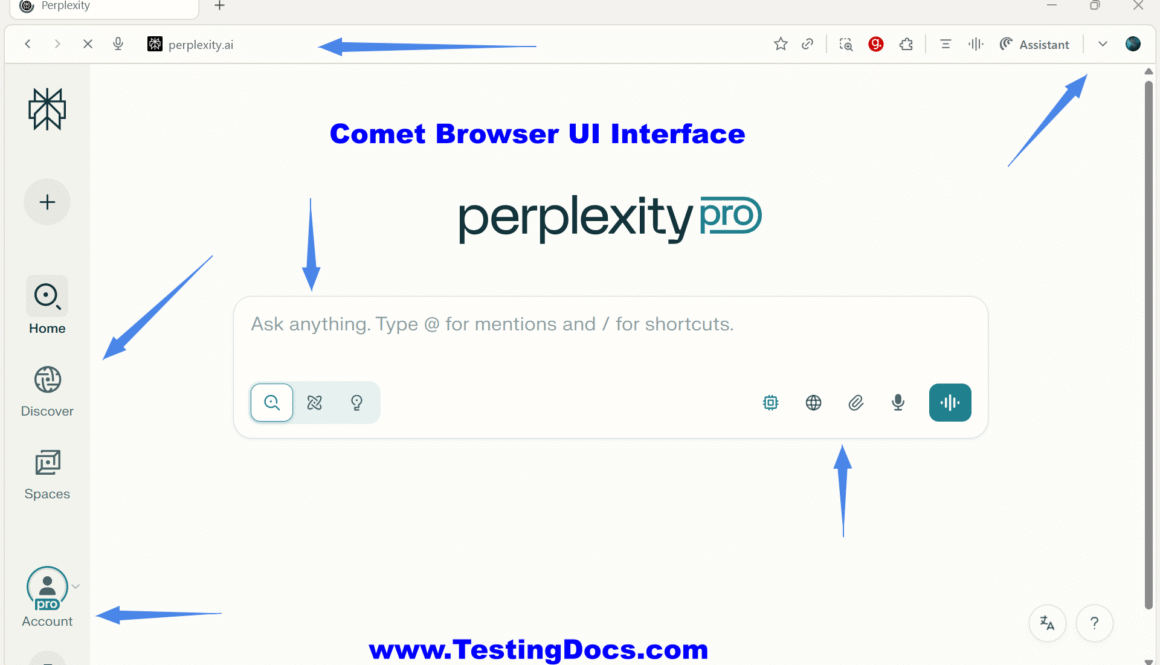

Comet Browser UI Interface

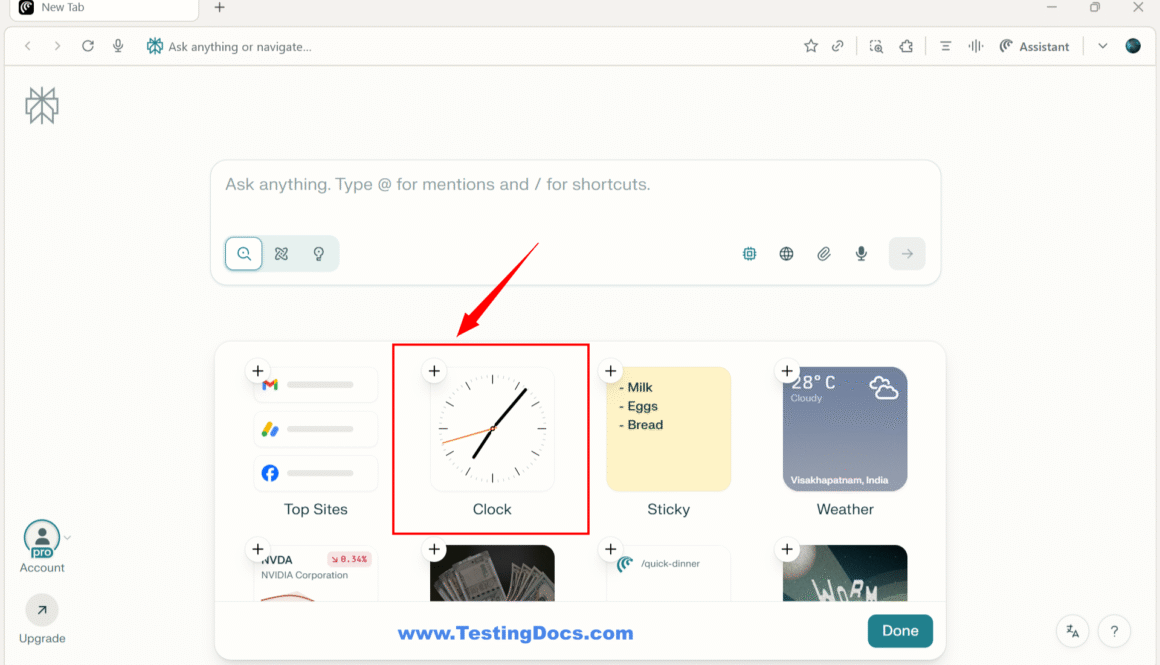

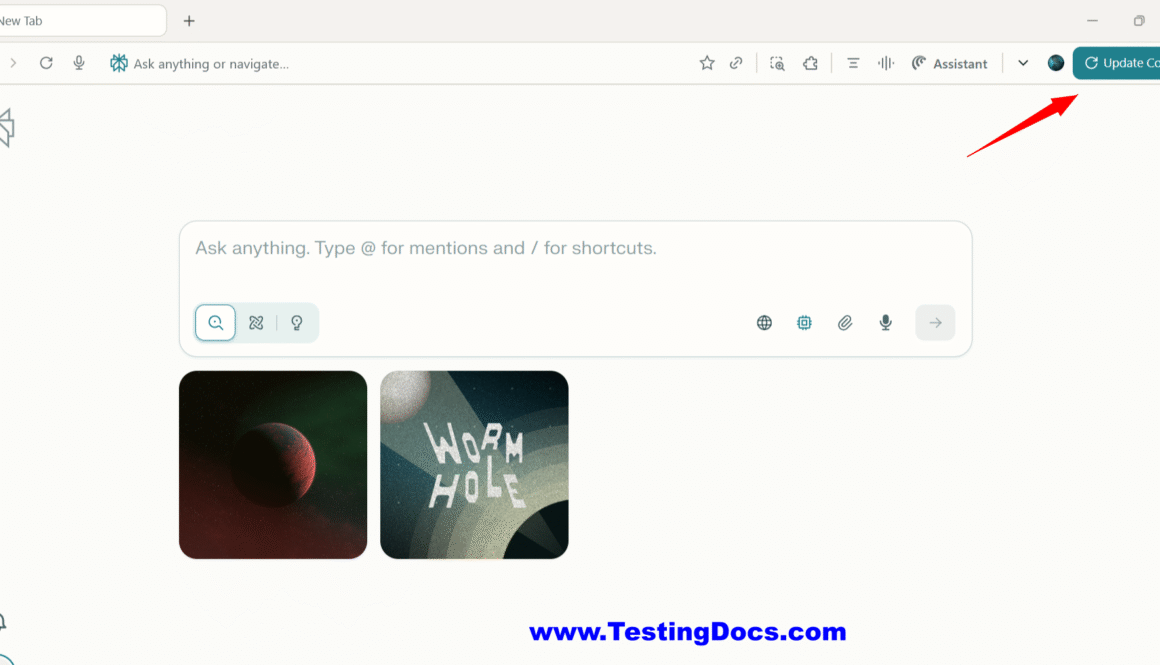

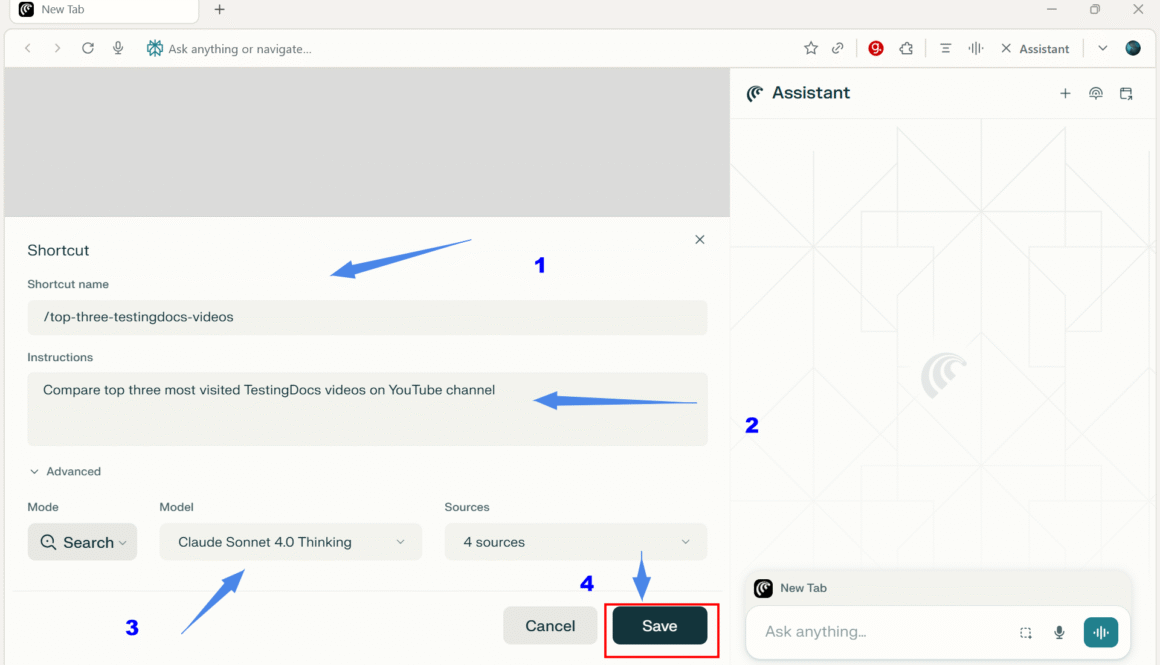

The Comet Browser, developed by Perplexity, features an AI-native interface designed to transform traditional web browsing into an intelligent, conversational, and proactive experience.

Comet UI Elements

The most common browser UI elements are as follows:

| UI Element | Description / Function |
| --- | --- |
| Address Bar (Omnibox) + “Voice Mode” Button | Enter URLs or natural-language queries; […] |