Adversarial Testing

Adversarial Testing

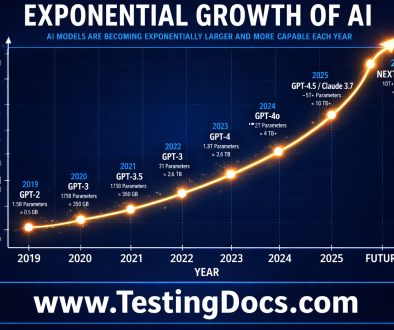

Adversarial testing ( or red teaming) is a cybersecurity technique used to evaluate the effectiveness of a system’s security measures by simulating the tactics, methods, and procedures that real-world attackers might employ. The goal is to identify vulnerabilities and weaknesses in the system’s defenses before malicious actors can exploit them.

Adversarial testing involves breaking the system under test with harmful, malicious inputs simulating real-world threats. It involves creating scenarios that mimic the tactics of actual attackers. This could include exploiting known vulnerabilities, using social engineering techniques, or employing advanced persistent threats.

Example

Adversarial testing evaluates an AI model to learn how it behaves when provided with maliciously harmful inputs. These harmful inputs to the GenAI model are called Adversarial queries. They are likely to cause a model to fail in an unsafe manner.

More information can be found here.